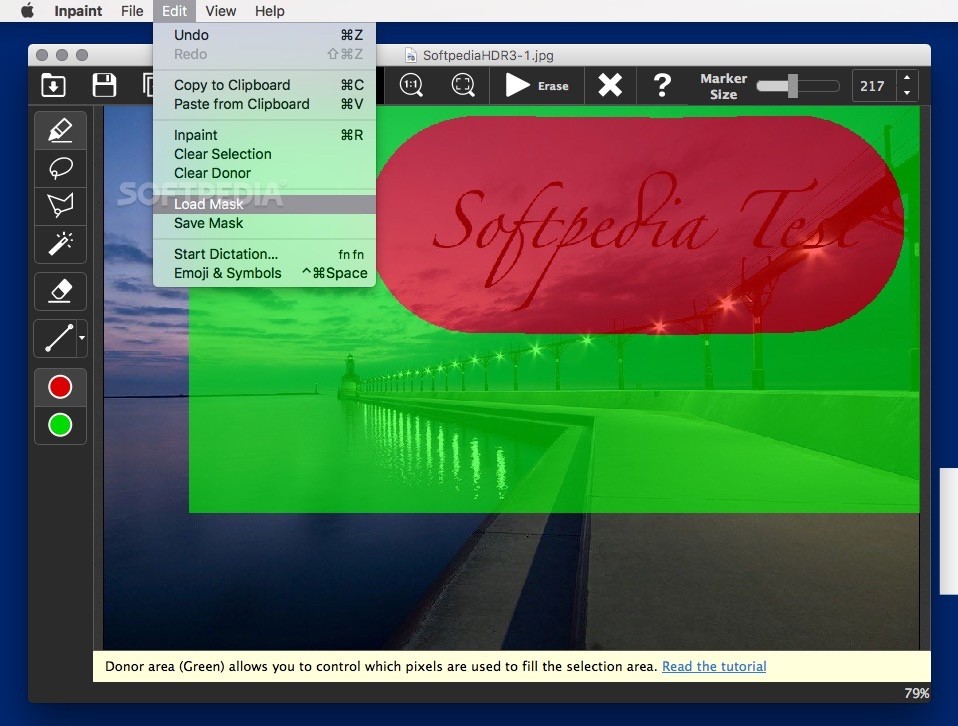

Run update-v3.2.bat to update or install all of the dependencies you need. First copy the update-v3.2.bat file to the same directory as your ComfyUI installation: it should be placed in the ComfyUI_windows_portable folder, which contains the ComfyUI, python_embeded, and update folders.

The 1.2M-Imagenet model was trained on the complete 1.2M-image set of ILSVRC'12 for 110 epochs on a Titan-X GPU and took one month to train. The 100K-Imagenet model was trained on a random subset of 100K images from ILSVRC'12 for 500 epochs, spanning 6 days. This 100K set was chosen at random, and we believe the results should not change as long as the set is chosen truly at random. However, the list of exact 100K image ids is available for download below.

1.2M-Imagenet Trained (110 epochs) and 100K-Imagenet Trained (500 epochs): results are shown on images from the ILSVRC'12 validation set.

Paris Street-View Results: StreetView Inpainting.

Citation: Deepak Pathak, Philipp Krahenbuhl, Jeff Donahue, Trevor Darrell and Alexei A. Efros. Context Encoders: Feature Learning by Inpainting.
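Before running update-v3.2.bat, it can help to confirm that the script sits in the right folder. The snippet below is a minimal sketch of such a check, not part of ComfyUI or of the update script. The function name `check_portable_layout` is hypothetical, and the underscored folder names (`python_embeded` in particular) are an assumption about how the portable build spells them on disk.

```python
from pathlib import Path

# Folder names as described in the text; the underscores are an assumption.
EXPECTED_DIRS = ("ComfyUI", "python_embeded", "update")
SCRIPT_NAME = "update-v3.2.bat"


def check_portable_layout(root):
    """Return a list of problems found in the portable folder (empty if none)."""
    root = Path(root)
    problems = [
        f"missing folder: {name}"
        for name in EXPECTED_DIRS
        if not (root / name).is_dir()
    ]
    # The update script should sit next to the three folders, not inside them.
    if not (root / SCRIPT_NAME).is_file():
        problems.append(f"missing file: {SCRIPT_NAME}")
    return problems


if __name__ == "__main__":
    # Hypothetical location; point this at your own installation.
    for problem in check_portable_layout("ComfyUI_windows_portable"):
        print(problem)
```

An empty result means the three folders and the script were found side by side; anything else lists what is missing.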

Context Encoders: Feature Learning by Inpainting

Semantic Inpainting results on held-out images by Context Encoder.

We present an unsupervised visual feature learning algorithm driven by context-based pixel prediction. By analogy with auto-encoders, we propose Context Encoders: a convolutional neural network trained to generate the contents of an arbitrary image region conditioned on its surroundings. In order to succeed at this task, context encoders need to both understand the content of the entire image and produce a plausible hypothesis for the missing part(s). When training context encoders, we have experimented with both a standard pixel-wise reconstruction loss and a reconstruction plus an adversarial loss. The latter produces much sharper results because it can better handle multiple modes in the output. We found that a context encoder learns a representation that captures not just appearance but also the semantics of visual structures. We quantitatively demonstrate the effectiveness of our learned features for CNN pre-training on classification, detection, and segmentation tasks. Furthermore, context encoders can be used for semantic inpainting tasks, either stand-alone or as initialization for non-parametric methods.

Demo and Source Code: Inpainting Code; Features Caffemodel.

These are Context Encoder results on random crops of "held-out" images. The center half region is inpainted by our method. All the models were trained completely from scratch. Browser images appear on a rolling basis, so you can still see results while the full file is being loaded.

The img2img resize modes treat the aspect ratio differently. One option is to forget the aspect ratio and just stretch the image. Crop and resize: this will crop your image to 500x500, THEN scale to 1024x1024. Aspect ratio is kept, but a little data on the left and right is lost. Resize and fill: this will add in new noise to pad your image to 512x512, then scale to 1024x1024, with the expectation that img2img will...
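The arithmetic behind the resize modes described above can be sketched in a few lines. This is an illustrative calculation only, not the code of any particular UI. The function names are hypothetical, and the 512x500 source size is an assumption chosen because it reproduces the 500x500 crop and 512x512 pad mentioned in the text.

```python
def just_resize(src_w, src_h, dst_w, dst_h):
    """Stretch mode: independent scale per axis, aspect ratio ignored."""
    return dst_w / src_w, dst_h / src_h


def crop_and_resize(src_w, src_h, dst_w, dst_h):
    """Return (crop box as (left, top, right, bottom), uniform scale factor)."""
    target_ratio = dst_w / dst_h
    if src_w / src_h > target_ratio:
        # Source is too wide: trim equally from the left and right.
        crop_w, crop_h = round(src_h * target_ratio), src_h
    else:
        # Source is too tall: trim equally from the top and bottom.
        crop_w, crop_h = src_w, round(src_w / target_ratio)
    left = (src_w - crop_w) // 2
    top = (src_h - crop_h) // 2
    return (left, top, left + crop_w, top + crop_h), dst_w / crop_w


def resize_and_fill(src_w, src_h, dst_w, dst_h):
    """Return (padded canvas size, offset of the original, uniform scale)."""
    target_ratio = dst_w / dst_h
    if src_w / src_h > target_ratio:
        # Source is too wide: add padding above and below.
        pad_w, pad_h = src_w, round(src_w / target_ratio)
    else:
        # Source is too tall: add padding on the left and right.
        pad_w, pad_h = round(src_h * target_ratio), src_h
    offset = ((pad_w - src_w) // 2, (pad_h - src_h) // 2)
    return (pad_w, pad_h), offset, dst_w / pad_w


if __name__ == "__main__":
    # Assumed source size of 512x500, scaled to a 1024x1024 target.
    print(just_resize(512, 500, 1024, 1024))
    print(crop_and_resize(512, 500, 1024, 1024))
    print(resize_and_fill(512, 500, 1024, 1024))
```

For the assumed 512x500 input, cropping trims a 6-pixel strip from each side to reach 500x500, while filling pads the height up to 512x512 before the final scale to 1024x1024.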
